AI adoption is at an all-time high, and AI is entering every aspect of our lives. To increase your productivity, you can add the new Microsoft Copilot, an AI assistant, to your Microsoft Office applications. The key question on users’ minds is, “Is Microsoft Copilot ethical or not?”

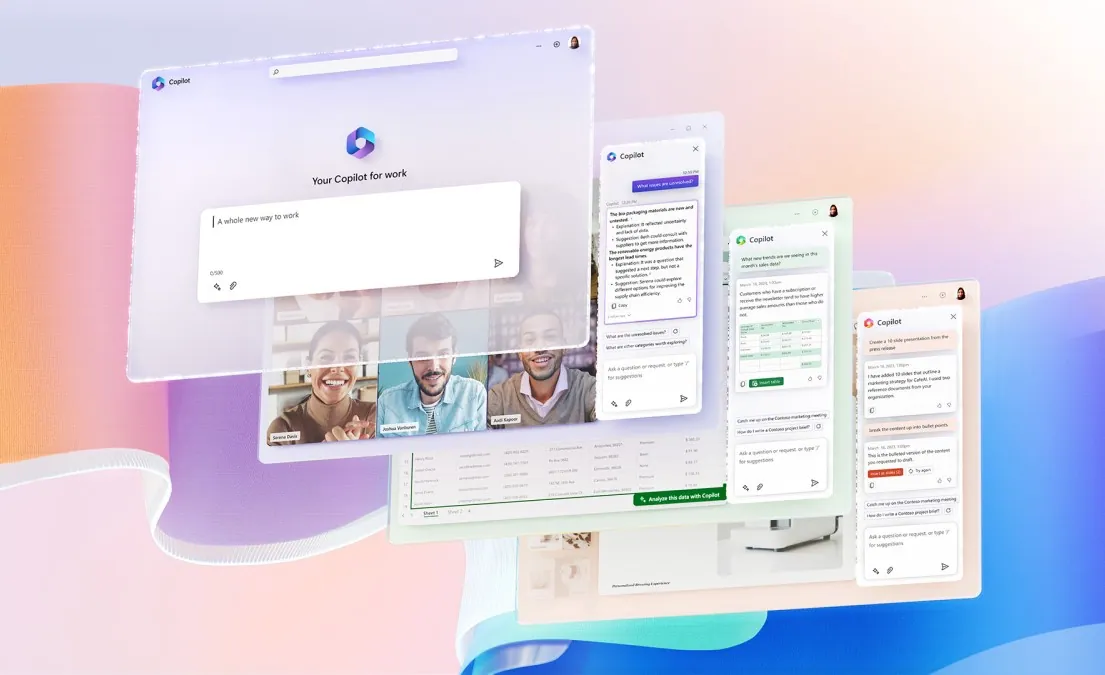

Microsoft recently launched a new feature for its Microsoft apps, including the security app: Microsoft Copilot. It is an AI assistant that helps you organize and generate data in Microsoft apps. Its features are impressive, and they are meant to reduce workload and improve productivity.

Using Microsoft Copilot in the Office apps should be enjoyable. You can work across apps and create documents and presentations in just a few seconds. You can try out Microsoft Copilot without worrying about ethical concerns.

How Ethical Is Microsoft Copilot?

Advances in AI technology and the use of language models have made it possible for AI to work efficiently and effectively in many environments. Microsoft recently introduced a new feature for the Microsoft 365 apps: Microsoft Copilot.

Copilot integrates AI into the Microsoft 365 apps, which is intended to make them considerably more productive. The question arises: is Microsoft Copilot ethical? You will ask this mainly because the Microsoft 365 apps are widely used in business, so is it safe to rely on the data Copilot generates? Microsoft claims that Copilot will be the most powerful productivity tool on the planet, but you still have to cross-check its output every time.

Also, the ideas generated by Microsoft Copilot are not necessarily sufficient on their own. Go through them and add your own thoughts and observations to take your work to the next level.

Ethical Considerations That Microsoft Copilot Should Address

If you are also wondering whether Microsoft Copilot is ethical, there are several things to keep in mind while trying it. Since this kind of AI is still in its infancy, we can expect some issues at the moment.

Data Privacy and User Consent

The first ethical consideration is data privacy. Microsoft should make clear how Copilot stores user data and whether it provides an option to restrict that data from being recorded. This is especially important for organizations that rely on the Office 365 apps for their main work.

Transparency and Accountability

Another important consideration when using Microsoft Copilot is Microsoft’s transparency and accountability for this AI. Microsoft should make clear how much data Copilot collects and where it is used and stored. If there is a data breach, how will the organization’s data be secured?

What Are The Potential Risks And Threats Of Microsoft Copilot?

With the official announcement made, Microsoft Copilot will soon be integrated into the Microsoft 365 apps. Although there are no Microsoft Copilot ethical concerns as such, there are still several potential risks and threats to consider while trying it out.

1. Can Be Used To Spread Misinformation

Because Microsoft Copilot is an AI that generates content, some users could take advantage of it to spread misinformation. This can be dangerous for the people who use the AI, and it would reflect badly on the wider AI field as well.

2. Can Be Used To Generate Spam

Since Microsoft Copilot is capable of generating emails and messages, it can be used to generate spam as well. Microsoft should provide safeguards so that it is not used for the wrong purposes.

3. Can Be Used To Create Hate Speech

Microsoft Copilot can be used to generate documents and emails, so it can also be used to create hate speech. Microsoft should look into ways to prevent the generation of hate speech or any similar content that can hurt people.

Does Microsoft Copilot Obtain User Consent For Data Collection?

Even though there are no Microsoft Copilot ethical concerns as such, whenever we install a Microsoft app on our system we have to agree to its terms and conditions. In the same way, we will have to agree to the terms and conditions of Microsoft Copilot. By doing so, we will be consenting to data collection without knowing whether Microsoft Copilot stores user data and uses it to train the model. We will have to wait until Microsoft Copilot is integrated with the Microsoft apps to find out whether there are options for restricting data collection. That said, Microsoft has made clear in its announcements that users can grant Copilot access to their content and revoke that access too.

Is Microsoft Copilot Transparent About Its Data Usage?

Microsoft states that it doesn’t use customer data to train the LLMs, but experts say we will have to wait and see how Microsoft analyzes our data and what it does with that data once collected. Giving Copilot access to all the Microsoft apps is considered a risk. Still, we can trust Microsoft to secure our data and to address the concerns about Microsoft Copilot ethical issues.

Does Microsoft Copilot Prioritize User Privacy?

Yes, Microsoft says Copilot gives priority to user privacy, and it claims that it does not collect any data from users. Keep in mind, though, that if you want to use Copilot in any Microsoft 365 app, you will have to give it access to your files in real time.

Conclusion

The Microsoft Copilot ethical concerns apply mainly to organizations that rely on the Microsoft apps for their work. Students and other everyday users can try out Microsoft Copilot with ease, without having to worry about any ethical issues.